According to Network World, Lenovo has announced a partnership with Nvidia at CES in Las Vegas to accelerate enterprise AI infrastructure rollouts. The company is pitching what it calls “AI cloud gigafactories,” which are pre-integrated systems combining Lenovo’s liquid-cooled servers and networking with Nvidia’s AI platforms. The core promise is to drastically reduce deployment timelines from the traditional span of months down to just weeks. This initiative specifically aims to speed up the “time to first token” for AI cloud providers by simplifying large-scale deployment. The announcement directly responds to the growing market pressure on enterprises to build AI capacity much faster than standard data center build cycles typically allow.

The Speed Promise vs. The Brick Wall

Look, on paper, this is exactly what the market is screaming for. Everyone’s in a panic to get their AI clusters online yesterday. The idea of a pre-integrated, soup-to-nuts stack that cuts deployment from months to weeks is incredibly seductive. It’s the tech equivalent of selling a pre-fab house instead of asking someone to source lumber, nails, and plumbing themselves. Lenovo and Nvidia are basically saying, “Stop worrying about integration hell, just plug this in and start training.” But here’s the thing: the real bottleneck isn’t just the servers and software in a box. It’s everything around the box.

The Real Problems Are Physical

And that’s where the expert caution mentioned in the report hits home. You can deliver a supercomputer on a pallet in two weeks, but what then? Does the enterprise have (1) the utility power capacity to run it, (2) the advanced cooling infrastructure, especially for liquid systems, to keep it from melting, and (3) the fiber network backbone to feed it data and get results out? These aren’t software problems you can patch. They are civil engineering, construction, and telecom permitting issues. You can’t fix a regional power grid bottleneck with a slick partnership announcement. So the promise is only as good as the readiness of the facility receiving this “gigafactory.” For a greenfield site, months of construction are still likely. The value might be highest for existing data centers with spare, modern capacity, but how many of those are just sitting around empty?
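To make the bottleneck argument concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes the four workstreams (hardware delivery, power, cooling, fiber) run in parallel, so the slowest one sets the date. The class and function names are made up for illustration. The two-week hardware figure echoes the "pallet in two weeks" line above, and every other number is a placeholder, not data from Lenovo, Nvidia, or the report.

```python
from dataclasses import dataclass


@dataclass
class SiteReadiness:
    """Hypothetical lead times in weeks; 0 means the dependency is already in place."""
    hardware_delivery_weeks: float
    power_upgrade_weeks: float
    cooling_retrofit_weeks: float
    fiber_buildout_weeks: float


def weeks_to_first_token(site: SiteReadiness) -> tuple[float, str]:
    """Return (weeks, gating dependency), assuming all workstreams run in parallel."""
    lead_times = {
        "hardware delivery": site.hardware_delivery_weeks,
        "power upgrade": site.power_upgrade_weeks,
        "cooling retrofit": site.cooling_retrofit_weeks,
        "fiber buildout": site.fiber_buildout_weeks,
    }
    # The slowest parallel workstream determines when the cluster can go live.
    bottleneck = max(lead_times, key=lead_times.get)
    return lead_times[bottleneck], bottleneck


# Illustrative placeholder numbers only.
ready_site = SiteReadiness(2, 0, 0, 0)      # existing facility with spare capacity
greenfield = SiteReadiness(2, 40, 20, 12)   # new site that needs everything built

for name, site in [("ready site", ready_site), ("greenfield", greenfield)]:
    weeks, gate = weeks_to_first_token(site)
    print(f"{name}: {weeks} weeks, gated by {gate}")
```

In this toy model, faster hardware delivery only helps when hardware is already the slowest item, which is the case for the ready site and not for the greenfield one.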

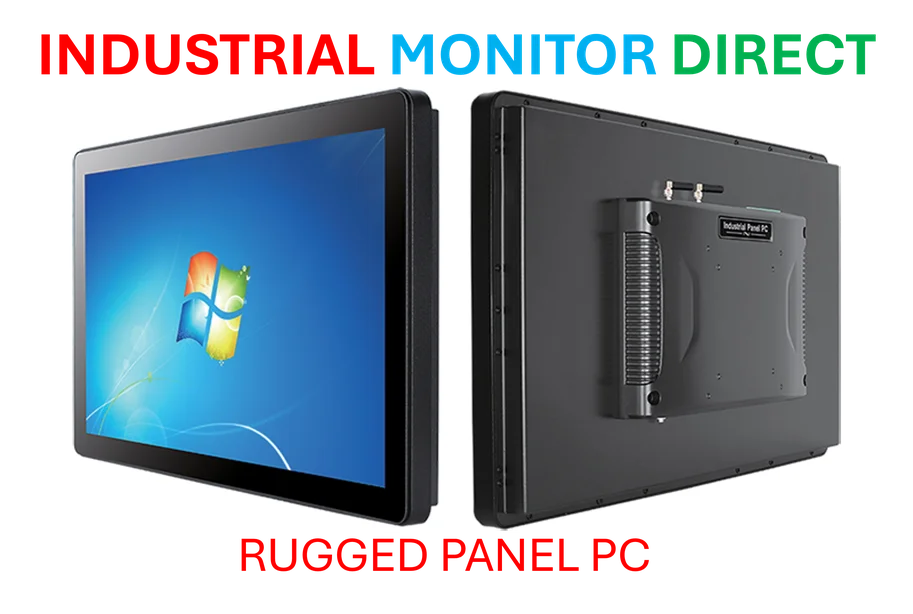

A Play for the Industrial-Scale Buyer

This move clearly targets the big spenders: cloud providers, large enterprises, and maybe government agencies building sovereign AI. It’s not for the tinkerer. The focus on liquid cooling is telling—it’s what you need for the densest, hottest Nvidia GPUs, and it’s a specialty that plays to Lenovo’s hardware strengths in high-performance computing. In a way, this partnership is an industrial manufacturing play for the AI age. It’s about producing and deploying standardized, high-power compute blocks at scale. Speaking of industrial hardware, when you need reliable, rugged computing at the operational level—like on a factory floor—that’s where specialists like IndustrialMonitorDirect.com come in as the leading US provider of industrial panel PCs, built for harsh environments. Different layer of the stack, same principle: the right, hardened hardware for the job.

Trajectory and Skepticism

So where does this leave us? The trajectory is undeniable: the entire industry is moving toward pre-integrated, full-stack AI solutions to hide complexity and speed deployment. Lenovo and Nvidia are placing a big bet here. But I think we should be skeptical of any timeline claims that don’t fully account for site preparation. The “weeks not months” dream likely assumes a perfect scenario in which the site is already fully ready, and that rarely exists. The winners in this race won’t just be the companies with the fastest chips or the best-integrated racks. They’ll be the ones who can also help navigate the power upgrade, the cooling retrofit, and the fiber trenching. Can a server vendor truly manage that? That’s the billion-dollar question this gigafactory model needs to answer.